extremely online #4: how public defenders use cops' own tools against them

TL;DR: This is Extremely Online, a tech accountability round-up by Julie Lee. It's for people who care about tech and AI accountability, bringing you original content, upcoming events and job and funding opportunities.

New here? I'm an investigative data journalist who writes about tech and AI. I have been a Technology Fellow at the ACLU of Massachusetts, research intern at the Surveillance Technology Oversight Project and Public Voices Fellow on Technology in the Public Interest. I’ve written stories on how civil asset forfeiture funded robot police dogs, the largest analysis to date of ShotSpotter’s efficacy in Boston, Kickstarter United’s historic fight for a four-day work week, and that sad time Evernote killed its free tier. I've also worked on projects about Flock, automated license plate readers and location data brokers that haven't made it onto the internet but that I'm hoping can inform future editions. Oh yeah, and I have a Ph.D. in neuroscience, for some reason.

More exciting content lined up for future issues, so please subscribe!

For this latest issue, I'm excited to share a Q&A with Jerome Greco, Digital Forensic Director at the Legal Aid Society. The Digital Forensic Unit analyzes forensic tools and digital evidence in service of its clients, including using the same tech as the cops to extract data, with consent, from clients' devices, for example to support an alibi. The unit is also involved in policy and advocacy, as well as educating other attorneys and the public about surveillance tech.

Greco started as a staff attorney at Legal Aid in 2011 and saw an opportunity to bring together analysts and public defenders across the organization. Since then, the team under his leadership has grown to 13.

I first came across the Digital Forensic Unit through their great newsletter, Decrypting a Defense. It sounded like a fascinating corner of the tech accountability space, so I reached out to learn more.

The conversation below was lightly edited and condensed for clarity.

How would you define digital forensics?

Jerome Greco: Traditionally, digital forensics is the field of finding and preserving digital evidence in a forensically sound way so that it can be used in court proceedings or any sort of official proceeding or investigation. My unit has a broader definition, in which we also include electronic surveillance, so things like facial recognition and ShotSpotter.

What kind of tools do you use for your work?

JG: We use open source tools whenever possible, but realistically, there are a lot of tools for which there's not an equivalent, particularly for phone extractions. So, at least for the traditional digital forensic stuff, we use a lot of the same tools as police departments and prosecutors' offices because one, it helps put us on an even playing field, and two, we get to definitively say that we know how it works and whether or not they're telling the truth. There are definitely times they have made a claim about how the software works or what it can’t do and we were able to show that we literally are using the same thing and can do what you're saying we can't.

What kind of training do you do in communities?

JG: A lot of times it's telling people that this technology exists. Sometimes people aren't even aware it’s happening or they've heard of it, but they don't actually know what that means. We actually get to see how it plays out in a courtroom and how prosecutors and police are going to use it, how judges are going to react. We can provide redacted examples to people so they can see that this is not a hypothetical issue, this is how it actually is being used.

Just as an example, people know the NYPD uses facial recognition. But we know what their actual process is and how it differs from what they've publicly provided. For example, a detective working on a case will submit either a video or still image to their facial identification section. They will run it through their photo manager facial recognition system from DataWorks Plus and will get approximately 250 possible matches from the image they submit to the system. Then the detective in the unit looks at those and says, I think this is the one that most looks like the probe image. He just makes his own decision based on looking at it as if he's some sort of expert or that this isn't already a highly suggestive situation.

Then they will look to see if there's any reason that person couldn't have committed a crime, for example, if they're currently incarcerated or hospitalized. Whatever they end up choosing, they send a possible match lead report to the case detective, identifying this person as a possible suspect. It's not enough for probable cause, but now you should go investigate this person.

Meanwhile, that detective generally has no idea. They know facial recognition was used, but they have no idea what that process was. They don't see all these other possible candidates. They just get this one person that was selected.

What they used to do is put the person in a photo array and see if a witness could ID him. But what they've started doing is even worse, which is see which officer or detective had recent contact with that person, and they send that person an email with either the video or the probe image and say, do you recognize this person?

Which, of course, that officer thinks, why are they reaching out to me? Oh, it must be because they already know I have some interaction with this person. Let me think about who I previously arrested who looks like this person, as if this person I arrested months ago for some trivial thing I just remembered out of the hundreds of arrests I've made since. And so, it's bullshit. That doesn't even account for the images that they actually manipulate.

When you say manipulate, what do you mean?

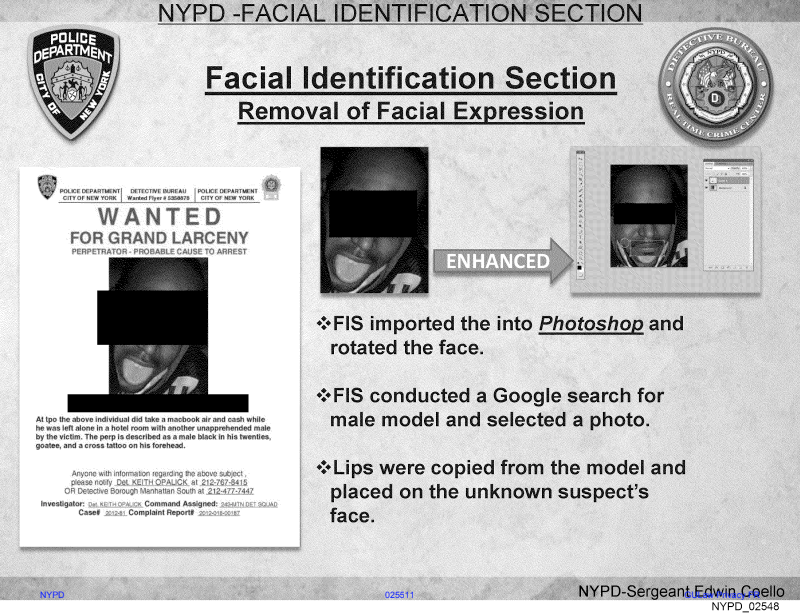

JG: There are a number of things that their system has problems dealing with. So for example, if your eyes are closed or covered, it has trouble recognizing that there's a face. Similarly, if your mouth is open or covered. There are documented cases in which they have photoshopped eyes and mouth on people. Which literally seems like tampering with evidence from my standpoint.

There's a very famous example in which they had a man whose mouth was open in the picture and they literally googled black male model lips and took a photo that they found from that Google search and photoshopped the lips onto this person to get an ID.

There's another example where they ran the person’s photo but couldn't find any match or it wasn't a high enough quality picture to use and they said to themselves, this guy looks like Woody Harrelson [and ran a photo of the actor through the system instead].

Another thing is if the picture is only from one side of somebody's face, they'll mirror it, which of course is completely wrong because even the most attractive people in the world don't have perfectly symmetrical faces. The vast majority of the population, me included, definitely do not.

How do you end up making quite technical issues understandable for presumably mostly non-technical audiences in the courtroom?

JG: Analogies can be useful. Another thing is to have experts. And when possible, we try to use something visual. When I first started working, [law enforcement] would get search warrants for phones and they would copy and look at everything basically without any restriction. It still happens but it was much worse at the time. Defense attorneys started challenging that, and the DA's office would come back and say, well, it's not possible for us to limit.

Then I started including screenshots showing exactly where the features and settings were that they could limit. And I think for a number of judges that made it a little easier for them because [the DA’s office] couldn't explain that. They would come back and say, well, it doesn't exactly work that way. And the judge would be like, well, you told me it didn't exist.

What's your favorite analogy for [insert technology here]?

JG: For example, for ShotSpotter, they'll say, well, the ShotSpotter alert said that there was a shot in this area and we saw these individuals running from that area and that gave the cops cause to stop them. We want to challenge the scientific reliability of it and the judge will be like, well what does it matter?

And my response is, well, right now they're telling you a magic button told you that there was a shot fired in this area and that was the justification to stop my client. And so if they called it a magic button, I think none of us would question that there should be some discussion about its reliability. But because they give it a brand name and a PR team, then all of a sudden we're all like, oh, it's totally reliable, even though we haven't actually done anything to prove that. And so, you know, if we're all okay with the magic button, then sure, let's not go forward, but otherwise, I think we'd have a hearing, right?

What do you wish people knew about the technologies that cops have at their disposal?

JG: We sometimes get questions like, can [the police] get this off a phone? But a lot of it may depend on your operating system, your settings, what version of the forensic software is being run, because they're constantly making updates, every time Apple updates iOS or WhatsApp puts out a new version. It is constantly changing.

Then, more broadly, with the less traditional digital forensics – like ShotSpotter, video surveillance, facial recognition and phone tracking – with all that data, and the ability to cross-reference it and pull it up at any point, there's such an intimate picture you can get of so many people's lives, including people who have done nothing wrong, who weren't suspects, at least not at the time that data was collected.

People are starting to become more aware of that, as they're seeing that proliferate into the general tech world, particularly with using their data to train AI. But I think if people really understood just how much is available and how much is being used and with how little regulation and transparency, they would be even more horrified than they already are.

I was actually surprised to see how much backlash there was against that Ring ad during the Super Bowl. It seems like people care more than they used to.

JG: Yeah, I definitely was concerned that people were going to be like, oh, this is a cool feature, which I think was Amazon's intent. There's a reason why they made it about cute animals being lost. So I was pleasantly surprised to see that there was a stronger backlash than I expected and from people who I don't think, until maybe recently, were paying as close attention to those issues.

I'm hoping that is a continual trend because realistically to really push back on what is happening and the level of invasion of people's privacy by technology, we need regular people. Like, you know, me just shouting from the rooftops is only so useful, and obviously we're fighting it in court, but that alone is not winning the day for everything. There's just so much. But if the general public actually is fighting back, I think that's a good sign.

Thanks so much to Jerome Greco for speaking with me!

Disclaimer: Events, jobs, etc. are not endorsements!

Upcoming events

March 5-7 (NYC): NYU-2026 PIT-UN Tech for Change Urban Informatics for Safe, Just, and Thriving Communities Hackathon, NYU Tandon School of Engineering

March 10 (Michigan, virtual): DISCO Network Presents - Against Surveillance & Spectacle, University of Michigan Digital Studies Institute

March 10-11 (Austin): HBCU AI Conference & Training Summit 2026

March 10-13 (Detroit, virtual): The Nonprofit Technology Conference

March 11 (virtual): Digital Governance for Democratic Renewal: Platform Ownership and the Politics of Online Content Governance, Columbia World Projects

March 27 (NYC): Open Source Social, Nava

March 28-29 (NYC): NYC School of Data, BetaNYC

Jobs and funding opportunities

Director of Engineering, Data, ACLU

Student Research Assistant, Knight-Georgetown Institute

Director of Advocacy Communications, The Internet Society

Tarbell Center AI Reporting Grants

Liked what you read? Subscribe!